Ending a calendar year provides a great opportunity to officially close the book on a specific period of time and review what has happened. We’ve grown accustomed to seeing annual reviews of the things that have shaped a year as it closes, but we get the opportunity to reflect on an entire decade much less frequently. There are all sorts of reviews appearing that highlight the last decade, and it’s amazing how fast one can forget key events that have happened. Indeed, when I present on weather trends in the insurance industry, I often suggest that our weather memory is generational, and we really only remember the last impactful event. In reality, however, our weather memory is likely even shorter than that. Therefore, I want to take this opportunity to review what I think are the biggest events that have shaped the catastrophe analytics industry over the last decade. Clearly, this is relative, as there is an almost endless list of events that could be labeled as industry-changing at a local level. From my point of view, though, there are just a handful of key events that will have lasting effects into the next decade for the insurance/reinsurance catastrophe analytics industry. Before I highlight my top event, let’s look at some of the other contenders.

Earthquakes

The decade began very actively with major earthquake events around the world. Just under two weeks into the new decade, a 7.0-magnitude earthquake struck Haiti on January 12, 2010 and quickly became a humanitarian disaster with a death toll reaching over 230,000. Just 46 days later, an 8.8-magnitude earthquake hit Chile on February 27. A few months later and halfway around the world, a 7.1-magnitude earthquake struck near Darfield in the Canterbury region of New Zealand on September 4 and was followed by a series of large aftershocks that changed nearby Christchurch forever. Six months after that, on March 11, 2011, nearly 16,000 people lost their lives in the 9.1-magnitude Tohoku earthquake and the deadly tsunami that followed, which still gives me chills when I remember watching the live videos unfold that day on TV. There have been many other large earthquakes as well, such as Napa, CA (2014), Nepal (2015), and Italy (2016), all of which had a large influence on the insurance industry. These earthquakes also impacted catastrophe model updates, not only regionally, but also across the globe, as they provided excellent learning opportunities and new information related to engineering, aftershocks, liquefaction and multi-fault ruptures. Catastrophe modeling companies have been able to use lessons from these events to update key components in U.S. earthquake models, despite not having a lot of resources dedicated to researching earthquakes in the U.S.

Severe Weather

The decade also kicked off with another string of events that seemed to come out of nowhere and showed how the catastrophe analytics industry can change in the blink of an eye. The U.S. severe weather season was active in early 2011 with two key events: 1) the EF4 tornado that struck Tuscaloosa and Birmingham, Alabama on April 27, and 2) the EF5 tornado that struck Joplin, Missouri on May 22. That 2011 severe weather season still stands out in terms of both insured loss and the number of severe weather observations. These events were likely the final straw for the various catastrophe modeling companies, as they soon upgraded their older severe thunderstorm models with pretty drastic changes. In fact, prior to 2011, many insurance companies largely disregarded the output of the severe thunderstorm models. With the newer models we currently have, however, insurance companies are actually using the output in many different facets of their business. The 2011 tornadoes also illustrated the importance of understanding the increased risks associated with the growth that is occurring in many urban areas around the world. These events are useful in deterministic event analysis to see what might happen if a Joplin-type event hit an area of high exposure concentration.

Non-Hurricane Landfalls

In the early part of the decade, another large event occurred that had far-reaching effects. After threatening much of the U.S. East Coast, Superstorm Sandy eventually made landfall along the New Jersey coastline on October 29, 2012. With over 60 million people affected, this storm taught many lessons to inhabitants of a stretch of coastline where relatively few large named storms had made landfall in the past. You may remember that this storm prompted many discussions around whether it was, in fact, a hurricane at landfall. How that uncertainty influenced hurricane deductibles was very interesting. Sandy also highlighted issues with coastal storm surge and rainfall in highly developed areas, prolonged business interruption, and how contents and components of critical infrastructure, such as servers and electrical systems, are modeled.

Wildfires

In reviewing the last decade, the devastating wildfires that occurred, starting with the Fort McMurray, Alberta, Canada wildfire on May 3, 2016, warrant mention. Soon thereafter, we saw a rare Southeast wildfire hit Gatlinburg, TN in November of 2016. These events were the precursor to several major wildfires that impacted California and brought much-needed change to outdated catastrophe models to address this costly new peril. We also saw new burdens placed on policyholders as a result of these events, such as non-renewal notices and state mandates. As rolling power outages became more common, they brought new attention to business interruption insurance. The wildfire risk continues to grow around the world, and the lessons we’ve learned thus far are changing how this risk will be viewed in the future, with much more focus on defensible space and the overall accumulation of risk in any given wildfire zone or Wildland Urban Interface (WUI).

New Risks

An interesting aspect of looking at catastrophic losses over a decade is that new trends can be observed. When thinking back to 2010, many in the insurance industry thought the word “cyber” was about computer networks or virtual reality. In just a decade, however, a new industry has been born, which will likely continue to grow in the future. We are just at the forefront of new product offerings and of understanding the risk associated with cyber loss, with new cyber models now becoming another tool that catastrophe analytics teams can incorporate into their daily management of risk.

Floods

Lastly, a review of the decade would not be complete without thinking about the countless major flooding events that have impacted the world over the last decade, many of which were labeled 1,000-year flood/rainfall events. This is likely the hardest list to narrow down, but I think two events are the most prominent. The 2011 Southeast Asian floods stand out as a major industry event because they were a classic known unknown risk, with many new factories being built on known flood plains, but no catastrophe hazard models available to understand such risk. The losses were compounded by contingent business interruption losses after the Japan earthquake forced suppliers to relocate from Japan to Southeast Asia. Clearly, these circumstances provided far-reaching opportunities to better understand worldwide flood risk. The second event is Hurricane Harvey (2017), whose wind-related losses pale in comparison to its flood-related losses. As flood is typically not a covered peril in private homeowners policies, the large majority of Harvey’s impacts were uninsured, illustrating that there is a large protection gap even in the U.S. Commercial and automobile flood losses still made Harvey a major event for certain segments of the market, however. The flooding likely also put pressure on the catastrophe analytics industry to invest more time and resources into understanding the peril. However, Hurricane Harvey had an even greater influence, which brings me to the most impactful event for the catastrophe analytics industry over the last decade.

The Top Event

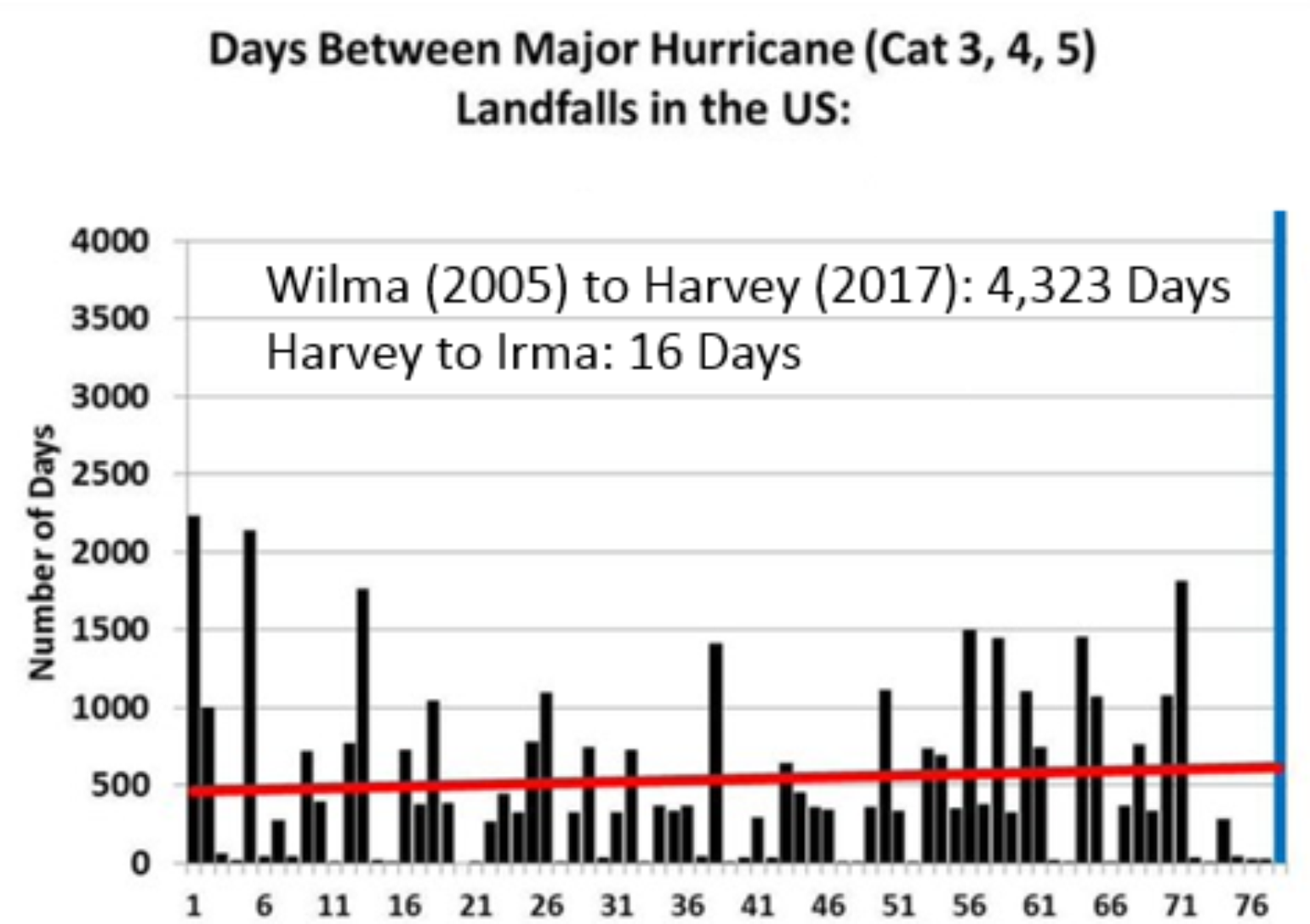

The continuation of the U.S. major landfalling hurricane drought for much of the last decade, followed by the quick reversion to the mean with the HIM FM landfalls (Harvey, Irma, Maria in 2017 and Florence and Michael in 2018), was unprecedented and had far-reaching effects across the global insurance industry. HIM FM provided valuable new data sets for the catastrophe risk models, and each storm also had its own influence on the catastrophe analytics industry. In the 170 years of landfall records, there had never been a period this long without a major hurricane landfall. During the drought, twenty-seven major hurricanes occurred in the Atlantic Ocean basin after the last major hurricane, Wilma, struck Florida in 2005, without one making U.S. landfall at major hurricane strength. The odds of this are 1 in 2,300, according to Phil Klotzbach at Colorado State University.

However, compared to the earthquakes, this return period is nothing, so one might ask why this is the top event for the decade. There are too many factors to discuss in this short BMS Insight, but the startling lack of hurricanes came right after the 2004 and 2005 hurricane seasons, when catastrophe modeling companies and the insurance industry were trying to gain a better understanding of the frequency and severity of these storms, and so the impacts were far-reaching. As a result of the major landfall activity of the 2004 and 2005 hurricane seasons, some in the insurance industry took drastic steps, and catastrophe modeling companies implemented rate changes that would cost the industry billions of dollars in increased reinsurance spending over the following years, which experienced no major landfalls. In the first part of the decade, however, with no major U.S. landfalling hurricanes and with global capital realizing that catastrophe risk is not correlated with the global economic cycle, capital flooded into the catastrophe markets and resulted in falling reinsurance prices. At the same time, the population of coastal areas exposed to hurricanes continued to increase, and complacency set in among the insurance industry and the general public, limiting disaster resiliency.

So, 10 years ago, the insurance industry was coming out of a decade marked by an unprecedented series of hurricane strikes and reeling from high insurance and reinsurance rates. No one at the time could have predicted that the industry would be granted an unprecedented, decade-long reprieve by Mother Nature, while simultaneously enjoying the most favorable global reinsurance and catastrophe market in memory. When HIM FM finally broke the long-standing hurricane drought, it taught many new lessons to the insurance industry that will last well into the next decade. This, to me, is why the continuation and end of the hurricane drought was the most important event for the catastrophe modeling industry in the last decade.

The 2020s

Taking the opportunity to look back at the last decade clearly shows there are cases where history can repeat itself. There are a great number of known unknown events that can impact the insurance industry. There are also many current questions that could be addressed in the decade to come, such as what will happen with climate change and how the catastrophe models will account for the near-term and long-term trends that could start to emerge over the next decade. Maybe there will be rare perils that impact the insurance industry, such as geomagnetic storms or a major volcanic eruption near a large population center. Neither of these perils has a standard catastrophe model, but they are often covered insurance risks. One thing is certain: there will be surprises over the next decade. The catastrophe analytics industry will continue to quantify the risks using new technologies, which will help reduce uncertainty and maybe even create new markets, ultimately helping to close the protection gap around the world.